In a male-dominated arena, Dr Maryam Mehrnezhad’s lab is providing the evidence-backed research that challenges assumptions and encourages technology and cybersecurity to be made inclusive in design and in practice.

We are surrounded by technology. We live in an interconnected, digital world, from our smart home devices and the apps we access on the smartphone that’s never far away, to the online world we all use for work, entertainment, and managing everyday life. But this expanding network comes with an increasing burden of risk. If the security behind these systems is compromised, what data could we lose and how would our privacy be affected?

And what if your experience does not align with the ‘default user’ technology is most often designed for – the young, white, able-bodied male?

Ensuring technology is safe and secure for every user is the job of systems security researcher, Dr Maryam Mehrnezhad.

Rethinking who technology is built for

Maryam is a white hat, or ethical, hacker who investigates cyber safety, security and privacy in what many in the cybersecurity industry consider ‘niche’ markets.

Her research concentrates on technology used by women, people living with disabilities, and domestic abuse victims and survivors. By investigating weaknesses in the security systems used in such technology — and often then working with the creators of those technologies to fix issues — Maryam’s research works for the social good, making the online world a safer space for those most at risk.

She leads the Usable Security and Privacy Lab, an international team of researchers that makes tech more accessible and focuses on those groups often overlooked in systems security. By combining deep technical expertise with social science perspectives and a feminist computing lens, they have uncovered security issues and privacy concerns that often affect the most vulnerable in society.

“Our research lab is a practical place. We’re interested in solving real world problems, not just working out theoretically perfect answers.”

She is one of the very few female system security researchers in the ~35-year history of the Information Security Group at Royal Holloway and approaches the topic through a different lens to most in her field.

Three pillars of research

The lab’s investigations currently centre on three core pillars.

1. FemTech and cybersecurity

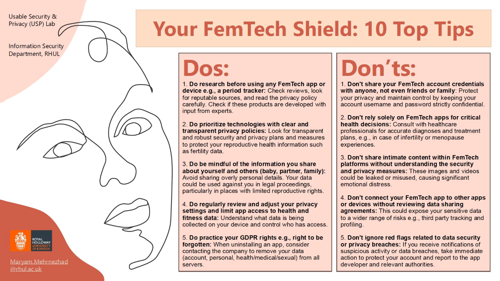

FemTech is technology aimed mainly at women, a fast-growing market already serving millions of people. From fertility trackers to menopause apps to sexual health tools, these technologies handle highly sensitive data yet often lack fundamental protections or transparency for users.

Maryam’s team has uncovered hidden privacy concerns in FemTech platforms, including cases where intimate data about fertility or menstrual cycles could be shared with third parties without users’ knowledge. Far from being a “niche” area, she argues these technologies affect half the population directly and the entire population indirectly.

Her research has driven national and international media coverage, sparking wider conversations about how women’s data is collected, used and protected.

New study looks at hidden privacy concerns of menopause tech

2. Tech abusability and domestic safety

Digital abuse is a growing concern in domestic violence cases. Abusers can track a victim’s location through spyware on their smartphone, manipulate smart-home devices like temperature settings to control behaviour, or compromise their victim’s privacy through hacking apps.

Working with the Surrey Domestic Abuse Partnership (SDAP), a collection of four charities in the UK, Maryam’s lab developed the Tech Abuse Handbook, a guide that empowers victims and survivors of domestic tech abuse by helping them to understand and prevent digital coercion. During the project in 2025, the research team held multiple meetings with frontline support workers, hearing anonymised real-world cases and co-designing clear, accessible guidance.

“It was rewarding but emotionally challenging to hear stories of tech abuse in domestic settings and see how current UK laws fail to protect victims.”

Offering practical guidance and security tips, the handbook has already been released and is being used by SDAP. It is also being translated into five additional languages to widen its impact.

New domestic tech abuse handbook launched to help victims

3. Accessibility by design

Maryam and her team also investigate technologies designed specifically to support accessibility. These tools help people with disabilities access digital content without it having to be delivered in alternative formats or through additional costly or bulky devices. Examples include text-to-speech, captioning, screen readers and voice-activated systems, such as a smart Internet of Things (IoT) cane.

Because many of these technologies are developed from charity-led projects using open-source software, they never undergo robust security testing, which in some cases might expose their vulnerable users to the possibility of a security breach. Maryam’s team works with these developers, patiently building trust with the organisations behind the technology and helping them fix the problems before the team publishes any details of the flaws.

“We don’t compromise on accessibility,” says Maryam. “We treat accessibility as a non-negotiable security requirement.”

Research Article: AXECC: Benchmarking the Privacy and Accessibility Impact of Browser Extensions

Providing security without causing harm

Maryam and her team practise what’s known in cybersecurity as ‘ethical hacking’. They isolate the technology in a ‘sandbox environment’ so that their tests and hacks do not affect general users. Once a security flaw is identified, they follow an established procedure of responsible disclosure, first approaching the companies behind the technology so the issue can be fixed and systems made secure before the team publish any details of their research.

Often working under non-disclosure agreements, Maryam has collaborated with major industry partners including Apple, Google, screen reader companies, and health and accessibility companies (e.g., SPD, the makers of the Clearblue pregnancy, fertility and menopause tests), as well as charities and smaller organisations that often lack major financial backing. Her research has contributed to changes in the W3C sensor specifications, which are international web standards, and prompted security fixes in browsers and operating systems, including Safari (iOS 9.3) and Firefox 46.

Alongside industry engagement, Maryam is keen to raise public awareness. Her work regularly features in national and international media, and she encourages her team to communicate their research through workshops, podcasts and public events, helping people better understand digital risks and how they can protect themselves.

Expanding the reach of security research

Several of the lab’s projects demonstrate how research can reach far beyond an academic focus.

Detecting passwords from the sound of keys being pressed

One standout study explored whether AI could be used to work out passwords from the sound that keys make as they are pressed, for example during a Zoom call. By recording keystroke sounds and feeding that data into a machine learning system, the researchers demonstrated that passwords could be identified correctly between 93% and 95% of the time, depending on the type of recording made.

Since publication, the study has attracted significant public attention and is ranked among the top 5% of research outputs tracked by Altmetric – a tool that measures how often research is discussed beyond traditional scholarly arenas.

Study suggests that AI can detect your password from the sound of keys being pressed

Pet apps leaking data

Another project investigated around 50 pet care apps downloaded by millions of UK users. It revealed that only three complied with GDPR, while many others shared user data without consent or lacked basic security features.

The team alerted the companies involved and supported fixes before publishing their results. The findings received national media coverage and helped raise public awareness that “free” services often come at the cost of personal data. Over time, this project has shaped how users assess the privacy of even the simplest technologies.

Are our pets leaking information about us?

CyFer Exhibition

The team have also designed an immersive art exhibition, ‘CyFer’, which explored the sensitive topic of fertility and sexual data. The exhibition demonstrated how this seemingly innocent data can be collected and shared by third parties and the problems this can cause for girls and women. It was seen by 4,000 people during the three months it was displayed on the Royal Holloway campus.

“We received overwhelmingly positive feedback on how the experience helped the public understand our research. The work was partially exhibited at the Victoria and Albert Museum in London and urban spaces in Australia.”

Online hate speech

The lab also worked on a major project that demonstrated the presence of online hate speech on the content platform 4chan. This work was linked to AGENCY (2022-25), one of the lab’s Engineering and Physical Sciences Research Council (EPSRC)-funded projects.

Using pre-trained large language models, the team analysed over half a million posts and discovered that more than 11% of content on the platform contained hate speech targeting women, disabled people or people from a particular background. The resulting dataset was made open source, contributing to global understanding of online toxicity.

Why inclusive security matters

For Maryam, fostering a research culture that encourages finding opportunities to make a difference to the world is just as important as technical innovation. Through her lab and an online international mentoring programme, she is building a sustainable pipeline of independent researchers who collaborate with the communities that will benefit most from their work.

“I never believed I needed to shed my identity to be successful in academia. Instead of conforming to the image of a typical security researcher, I chose to lead with my lived experience – using it to drive meaningful change for others.”

Maryam actively encourages her team to also bring their personal experience and identity to their work. By using this diversity of interest to inform and drive their research, her team and their international collaborations are reshaping how cybersecurity is thought about. Their work is helping move technology beyond simply designing for the default user, creating systems and security that are accessible, inclusive and that work for everyone.

Explore more of Maryam's and her team's research on the USP lab's homepage.

USP Lab homepage

Return to our Research in Focus page to uncover more exciting research happening at Royal Holloway, University of London.

Research in Focus homepage